MM DeepSnake: Deep Learning–Based Classification of Snake Species in Myanmar

Min Si Thu & Myo Htet Zaw, Version 2 (YOLO26), 2025

1. Abstract

MM DeepSnake is a deep learning–based image classification system designed to identify 10 snake taxa found in Myanmar. The project addresses a practical need for automated, accurate snake identification in support of biodiversity research, wildlife conservation, and public safety in the region. Development has progressed through two major iterations: a VGG19 transfer-learning pipeline (v1) that reached approximately 62% Top-1 accuracy, and a YOLO26m-cls classification model (v2) that reached 93.1% Top-1 and 99.7% Top-5 accuracy, an improvement of about 31 percentage points in Top-1 accuracy.

2. Introduction

Myanmar is home to a rich diversity of snake species, many of which are venomous and pose significant risks to local communities. Accurate and rapid identification is critical yet challenging without expert knowledge. MM DeepSnake aims to democratize this capability through an accessible AI-powered tool, contributing to both conservation efforts and public health awareness.

The purpose of this project is to classify snake species for:

- acknowledging the ecological roles of snakes in Myanmar’s ecosystems;

- providing venom-type awareness for snake-bite triage and treatment.

Some of the target species are morphologically similar at the species level; therefore, genus-level grouping was adopted where necessary (e.g., Trimeresurus sp.).

3. Target Species

The model currently classifies 10 snake taxa found in Myanmar:

| # | Scientific Name | Common Name | Burmese Name |

|---|---|---|---|

| 1 | Trimeresurus sp. | Asian Palm Pit Vipers | မြွေစိမ်းမြီးခြောက် |

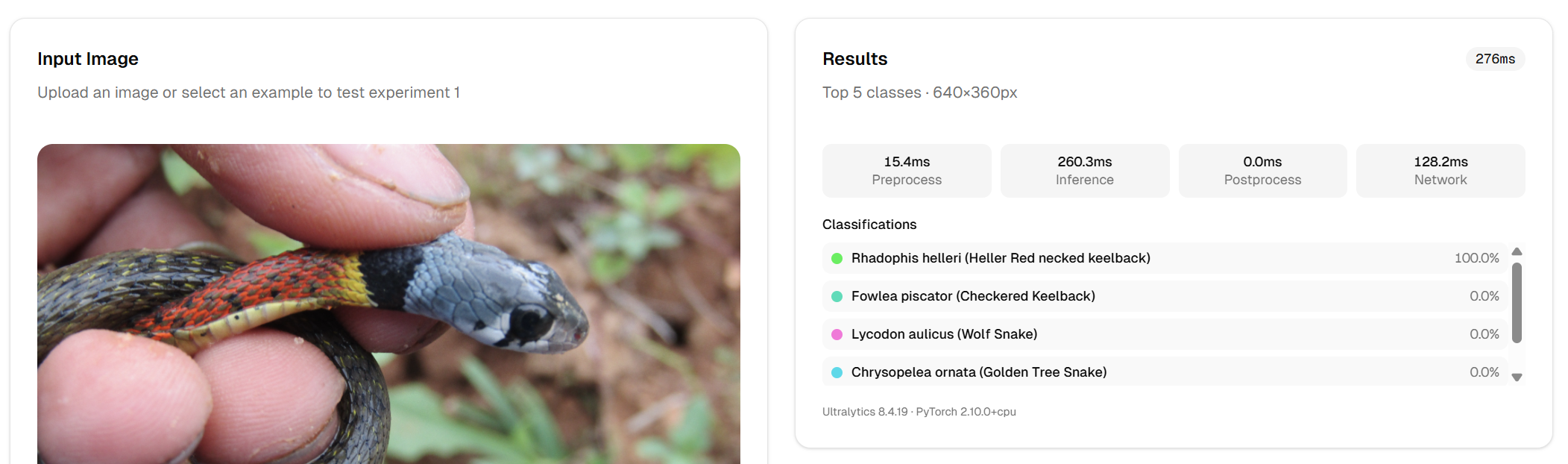

| 2 | Rhabdophis helleri | Heller’s Red-necked Keelback | လည်ပင်းနီမြွေ |

| 3 | Lycodon aulicus | Wolf Snake | မြွေဝံပုလွေ |

| 4 | Fowlea piscator | Checkered Keelback | ရေမြွေဗျောက်မ |

| 5 | Daboia siamensis | Eastern Russell’s Viper | မြွေပွေး |

| 6 | Chrysopelea ornata | Golden Tree Snake | ထန်းမြွေ |

| 7 | Bungarus fasciatus | Banded Krait | ငန်းတော်ကြား |

| 8 | Ophiophagus hannah | King Cobra | တောကြီးမြွေဟောက် |

| 9 | Laticauda colubrina | Sea Snake | ဂျပ်မြွေ |

| 10 | Naja kaouthia | Monocled Cobra | မြွေဟောက် |

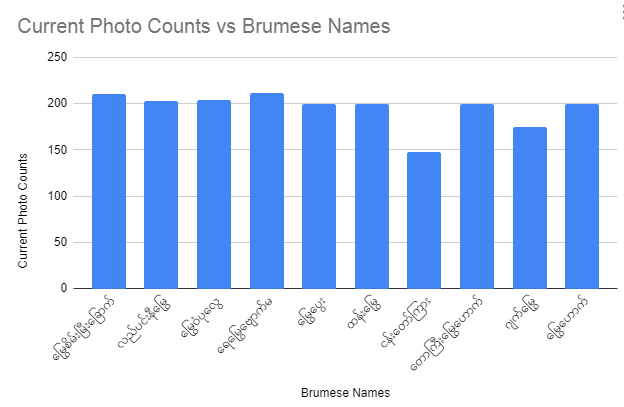

4. Dataset

Images were collected from various sources across Myanmar and curated into a single dataset (mm-snakes-raw-images-train-test-split-3).

The dataset was split into training, test, and validation subsets as follows:

| Property | Details |
|---|---|
| Total Images | ~1,928 |
| Training Set | 1,300 images |
| Test Set | 379 images |
| Validation Set | 201 images |
| Number of Classes | 10 snake species |
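As a quick sanity check on the split, the subset sizes in the table can be turned into proportions. The sketch below is illustrative only; the names `SPLIT_SIZES` and `split_fractions` are not from the project. Note that the three listed subsets sum to 1,880 images, somewhat fewer than the approximate total of ~1,928, and the source does not explain the difference.

```python
# Subset sizes as reported in the dataset table.
SPLIT_SIZES = {"train": 1300, "test": 379, "val": 201}


def split_fractions(sizes: dict[str, int]) -> dict[str, float]:
    """Return each subset's share of the summed subset sizes."""
    total = sum(sizes.values())
    return {name: count / total for name, count in sizes.items()}


if __name__ == "__main__":
    total = sum(SPLIT_SIZES.values())
    print(f"images across subsets: {total}")
    for name, frac in split_fractions(SPLIT_SIZES).items():
        print(f"{name:>5}: {frac:.1%}")
```

Relative to the summed subsets, this works out to roughly a 69/20/11 split between training, test, and validation.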

5. Methodology

5.1 Version 1: VGG19 Transfer Learning

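The first iteration employed a VGG19 convolutional neural network (Simonyan & Zisserman, 2015) pre-trained on ImageNet (Deng et al., 2009) as a feature extractor, with custom dense classification layers appended. The input resolution was 300×300×3. Training proceeded in two phases: feature extraction with the VGG19 base frozen, followed by fine-tuning with the full network unfrozen. The architecture and the per-phase results are summarized below.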

Architecture

Input (300 × 300 × 3)

→ Data Augmentation (Flip, Rotation, Zoom)

→ Rescaling (1/255)

→ VGG19 Base (ImageNet weights, frozen → unfrozen)

→ Flatten

→ Dense(1024, ReLU)

→ Dense(1024, ReLU)

→ Dense(512, ReLU)

→ Dense(10, Linear)

Training Strategy: Two Phases

| Phase | Epochs | Strategy | Train Acc | Val Acc |
|---|---|---|---|---|
| Feature Extraction | 30 | VGG19 frozen; only dense layers trained | ~80% | ~48% |
| Fine-Tuning | 20 | Full network unfrozen; LR = 1e-5 | ~91% | ~62% |

Limitation: The ~29 percentage-point train/validation gap indicated significant overfitting, motivating exploration of more powerful and modern architectures.
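One plausible contributor to this gap is the size of the dense head relative to the 1,300 training images. The back-of-the-envelope count below assumes the standard VGG19 convolutional base without its top layers (five 2×2 max-pooling stages, 512 output channels) feeding the Flatten layer. The function names are illustrative, and the figures are derived arithmetic rather than values reported by the project.

```python
def vgg19_feature_size(side: int, pools: int = 5, channels: int = 512) -> int:
    """Flattened feature length after the conv base (each pool halves, rounding down)."""
    for _ in range(pools):
        side //= 2
    return side * side * channels


def dense_head_params(in_features: int, widths: list[int]) -> int:
    """Weights plus biases for a stack of fully connected layers."""
    total = 0
    for width in widths:
        total += in_features * width + width
        in_features = width
    return total


if __name__ == "__main__":
    features = vgg19_feature_size(300)  # 300 -> 150 -> 75 -> 37 -> 18 -> 9
    params = dense_head_params(features, [1024, 1024, 512, 10])
    print(f"flattened features: {features:,}")
    print(f"dense-head parameters: {params:,}")
```

Under these assumptions the head alone has roughly 44 million trainable parameters, most of them in the first Dense(1024) layer, which is large for a training set of this size.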

5.2 Version 2: YOLO26m-cls (Current)

Version 2 replaced VGG19 with YOLO26m-cls (yolo26m-cls.pt), a modern, highly optimized classification backbone from Ultralytics (Jocher et al., 2023). The model was trained at 640×640 resolution with Automatic Mixed Precision (AMP) (Micikevicius et al., 2018), starting from ImageNet-pretrained weights.

Training Hyperparameters

| Parameter | Value |
|---|---|
| Model | yolo26m-cls.pt |
| Image Size | 640 × 640 |
| Epochs | 100 |
| Batch Size | 16 |
| Optimizer | Auto (SGD-based) |
| Initial Learning Rate (lr0) | 0.01 |
| Final Learning Rate Fraction (lrf; final LR = lr0 × lrf) | 0.01 |
| Momentum | 0.937 |
| AMP (Mixed Precision) | Enabled |
| Pretrained Weights | Yes (ImageNet) |
| Erasing Augmentation | 0.4 |
| Flip LR | 0.5 |
| HSV Hue / Sat / Val | 0.015 / 0.7 / 0.4 |
| Close Mosaic | 10 |
| Dropout | 0.0 |
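The `lr0` and `lrf` entries in the hyperparameter table interact: in Ultralytics, `lrf` is a fraction of the initial rate rather than an absolute value. The sketch below assumes the default linear decay (no cosine schedule) and ignores warmup; `linear_lr` is an illustrative helper, not a library function. With `optimizer=auto`, Ultralytics may also select its own initial rate instead of the configured `lr0`, so logged learning rates can differ from what this formula gives for the table values.

```python
def linear_lr(epoch: int, epochs: int = 100, lr0: float = 0.01, lrf: float = 0.01) -> float:
    """Learning rate at `epoch` under a linear decay from lr0 down to lr0 * lrf."""
    factor = max(1.0 - epoch / epochs, 0.0) * (1.0 - lrf) + lrf
    return lr0 * factor


if __name__ == "__main__":
    # Print the scheduled rate at the start, midpoint, last epoch, and end of training.
    for e in (0, 50, 99, 100):
        print(e, f"{linear_lr(e):.6f}")
```

The schedule starts at `lr0` and reaches `lr0 * lrf` once the full epoch budget has elapsed.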

Training Command

yolo train device=2 model=yolo26m-cls.pt \
data=ul://min-si-thu/datasets/mm-snakes-raw-images-train-test-split-3 \
project=min-si-thu/mm-deepsnake \
name=experiment-1 \
epochs=100 batch=16 imgsz=640

6. Experimental Setup

All v2 experiments were conducted on the Ultralytics Cloud platform using a Docker-based training environment. The hardware and software specifications are detailed below.

| Property | Details |
|---|---|
| Platform | Ultralytics Cloud (Docker) |
| GPU | NVIDIA RTX PRO 6000 |
| CPU | AMD EPYC 9655 (96-core) |
| Ultralytics Version | 8.4.15 |
| Python | 3.11.14 |
| OS | Linux (Ubuntu, glibc 2.35) |
| Total Runtime | 7 min 23 sec |

7. Results

7.1 Version Comparison

| Version | Model | Top-1 Accuracy | Top-5 Accuracy |
|---|---|---|---|
| v1 | VGG19 (fine-tuned) | ~62% | n/a |
| v2 | YOLO26m-cls | 93.14% | 99.74% |

Version 2 achieved a +31.1 percentage-point improvement in Top-1 accuracy. The near-perfect Top-5 accuracy of 99.7% means the correct species appears in the model’s top-5 predictions virtually every time.
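For readers unfamiliar with the two metrics, the sketch below shows how Top-k accuracy is typically computed from per-class scores. It is a generic illustration with made-up toy scores, not the project's evaluation code; `top_k_accuracy` is a hypothetical helper name.

```python
def top_k_accuracy(scores: list[list[float]], labels: list[int], k: int) -> float:
    """Fraction of samples whose true label is among the k highest-scoring classes."""
    hits = 0
    for row, label in zip(scores, labels):
        # Class indices ordered from highest to lowest score.
        ranked = sorted(range(len(row)), key=lambda i: row[i], reverse=True)
        hits += label in ranked[:k]
    return hits / len(labels)


if __name__ == "__main__":
    # Toy scores for 3 images over 4 classes (made-up numbers).
    scores = [
        [0.70, 0.10, 0.15, 0.05],  # true class 0: correct at top-1
        [0.20, 0.50, 0.25, 0.05],  # true class 2: correct only at top-2
        [0.40, 0.30, 0.20, 0.10],  # true class 3: missed even at top-2
    ]
    labels = [0, 2, 3]
    print(top_k_accuracy(scores, labels, k=1))
    print(top_k_accuracy(scores, labels, k=2))
```

With only 10 classes, a Top-5 hit means the true taxon falls in the upper half of the ranking, so Top-1 remains the more demanding measure.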

7.2 Final v2 Training Metrics (Epoch 99)

| Metric | Value |
|---|---|
| Top-1 Accuracy | 93.14% |
| Top-5 Accuracy | 99.74% |
| Training Loss | 0.00994 |
| Final Learning Rate | 1e-5 |

7.3 Technology Stack Comparison

| Component | v1 | v2 |
|---|---|---|
| Framework | TensorFlow / Keras | Ultralytics (PyTorch) |
| Base Model | VGG19 | YOLO26m-cls |
| Image Size | 300 × 300 | 640 × 640 |
| Training Platform | Google Colab | Ultralytics Cloud |
| Hardware | CPU / Colab GPU | NVIDIA RTX PRO 6000 |
| Model Format | .h5 | TorchScript / ONNX |

8. Key Findings & Discussion

- Switching from VGG19 to YOLO26m-cls with higher input resolution (640 vs. 300) delivered the single largest accuracy improvement in the project’s history.

- Automatic Mixed Precision (AMP) enabled fast training at minimal cost—a full 100-epoch run completed in under 8 minutes on a single RTX PRO 6000.

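- A training loss of ~0.01 at epoch 99 indicates that the model fit the training data closely, with no sign of underfitting; generalization is reflected separately in the Top-1 and Top-5 accuracy figures.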

- Top-5 accuracy of 99.7% makes this model highly viable for real-world deployment where a ranked shortlist of candidate species is acceptable.

9. Significance

MM DeepSnake demonstrates the rapid evolution achievable with modern YOLO-based classification architectures. Moving from a custom VGG19 pipeline at 62% accuracy to a production-ready YOLO26 model at 93.1% Top-1 / 99.7% Top-5 accuracy showcases how efficient, accessible, and impactful AI development can be for biodiversity and conservation applications in low-resource contexts like Myanmar.

10. Limitations

- The dataset contains approximately 1,928 images across 10 classes, which is relatively small for deep learning. Performance may degrade on under-represented species or atypical lighting conditions.

- The model does not include an out-of-distribution rejection mechanism; non-snake images may produce false positive classifications.

- Some species within Trimeresurus are grouped at the genus level due to morphological similarity, limiting species-level granularity.

- Evaluation was conducted on a held-out test set only; cross-validation and external test sets are recommended for future validation.
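To give a sense of how much uncertainty a test set of this size carries, the sketch below computes a 95% Wilson score interval for the reported Top-1 accuracy. It assumes the 93.14% figure was measured on the 379-image test set and that test images are independent. Neither the interval nor the helper name `wilson_interval` appears in the original evaluation.

```python
import math


def wilson_interval(p_hat: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 for about 95%)."""
    denom = 1.0 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


if __name__ == "__main__":
    # Assumed inputs: reported Top-1 accuracy and the listed test-set size.
    low, high = wilson_interval(0.9314, 379)
    print(f"95% interval: {low:.3f} to {high:.3f}")
```

Under these assumptions the interval runs from roughly 90% to 95%, which is one concrete reason a larger or external test set would make the estimate more reliable.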

11. Future Work

- Deploy v2 as a mobile application or REST API for field use by wildlife researchers and the general public.

- Expand the dataset to cover additional Myanmar snake species beyond the current 10 classes. Priority additions include Ptyas mucosa, Python bivittatus, Coelognathus radiatus, and Malayopython reticulatus.

- Experiment with larger model variants (YOLO26l-cls, YOLO26x-cls) for further accuracy gains.

- Collect hard-negative (non-snake) images to enable out-of-distribution rejection.

- Build a Burmese-language user interface to maximize accessibility for local communities.

- Perform k-fold cross-validation and evaluate on independent external datasets for more robust performance estimation.
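Until hard-negative images are available, one simple interim baseline for out-of-distribution rejection is to threshold the maximum softmax probability. The sketch below is a generic illustration and not part of MM DeepSnake. The threshold value, the `classify_or_reject` helper, and the example logits are all hypothetical, and a usable threshold would have to be tuned on held-out data.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax over a list of raw scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify_or_reject(logits: list[float], class_names: list[str],
                       threshold: float = 0.6) -> str:
    """Return the top class name, or 'unknown' when confidence is below the threshold."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return class_names[best] if probs[best] >= threshold else "unknown"


if __name__ == "__main__":
    names = ["Naja kaouthia", "Daboia siamensis", "Bungarus fasciatus"]
    print(classify_or_reject([4.0, 0.5, 0.2], names))  # sharply peaked scores
    print(classify_or_reject([1.0, 0.9, 1.1], names))  # nearly flat scores
```

Softmax confidence is known to be overconfident on unfamiliar inputs, so this should be read as a baseline to compare against once non-snake images have been collected.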

12. Conclusion

This report presented the development and evaluation of MM DeepSnake, an AI-powered snake species classifier for Myanmar. Through two iterative versions, the system improved from ~62% to 93.1% Top-1 accuracy by transitioning from a VGG19 transfer-learning approach to a YOLO26m-cls classification backbone. The v2 model achieves near-perfect Top-5 accuracy (99.7%) and was trained in under 8 minutes for US$0.24, demonstrating that high-quality, domain-specific classifiers can be built efficiently with modern tools. We strongly advise that every snake-bite case be referred to professional medical personnel immediately.

Contributors

References

- Abadi, M., Barham, P., Chen, J., Chen, Z., Davis, A., Dean, J., Devin, M., Ghemawat, S., Irving, G., Isard, M., Kudlur, M., Levenberg, J., Monga, R., Moore, S., Murray, D. G., Steiner, B., Tucker, P., Vasudevan, V., Warden, P., … Zheng, X. (2016). TensorFlow: A system for large-scale machine learning. In Proceedings of the 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI 16), 265–283. https://arxiv.org/abs/1605.08695

- Deng, J., Dong, W., Socher, R., Li, L.-J., Li, K., & Fei-Fei, L. (2009). ImageNet: A large-scale hierarchical image database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 248–255. https://doi.org/10.1109/CVPR.2009.5206848

- Jocher, G., Chaurasia, A., & Qiu, J. (2023). Ultralytics YOLO (Version 8.0.0) [Computer software]. Ultralytics. https://github.com/ultralytics/ultralytics

- Micikevicius, P., Narang, S., Alben, J., Diamos, G., Elsen, E., Garcia, D., Ginsburg, B., Houston, M., Kuchaiev, O., Venkatesh, G., & Wu, H. (2018). Mixed precision training. In Proceedings of the 6th International Conference on Learning Representations (ICLR 2018). https://arxiv.org/abs/1710.03740

- Paszke, A., Gross, S., Massa, F., Lerer, A., Bradbury, J., Chanan, G., Killeen, T., Lin, Z., Gimelshein, N., Antiga, L., Desmaison, A., Köpf, A., Yang, E., DeVito, Z., Raison, M., Tejani, A., Chilamkurthy, S., Steiner, B., Fang, L., … Chintala, S. (2019). PyTorch: An imperative style, high-performance deep learning library. In Advances in Neural Information Processing Systems 32 (NeurIPS 2019). https://arxiv.org/abs/1912.01703

- Redmon, J., Divvala, S., Girshick, R., & Farhadi, A. (2016). You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 779–788. https://arxiv.org/abs/1506.02640

- Simonyan, K., & Zisserman, A. (2015). Very deep convolutional networks for large-scale image recognition. In Proceedings of the 3rd International Conference on Learning Representations (ICLR 2015). https://arxiv.org/abs/1409.1556